Mike Zullo’s investigation into the authenticity of President Obama’s birth certificate has been plagued by inexpert testimony. First Zullo presented image analysis by people who didn’t know what they were talking about. Next he presented contextual criticism of the document based faulty memory, lack of expertise on vital records and a falsified historical document. He accepted the results of investigators who knew nothing about vital records in his debunked certificate numbering scheme. Finally Zullo found a real expert, a handwriting analyst, who seemed to agree with Zullo, but who had no known background in the field of electronic documents and high-end compression algorithms. (The Reed Hayes report was never shown to the public.)

Most recently in Zullo’s December 15, 2016, attempt, he seemed to be making a statistical claim, even though his sources were not qualified by Zullo as statisticians, and Zullo’s refusal to disclose the methodology and analysis used confounds peer review.

What I will do in this article is talk in general about a statistical fallacies that may underlay Zullo’s argument, and then in Part 2 present my own experiment and analysis.

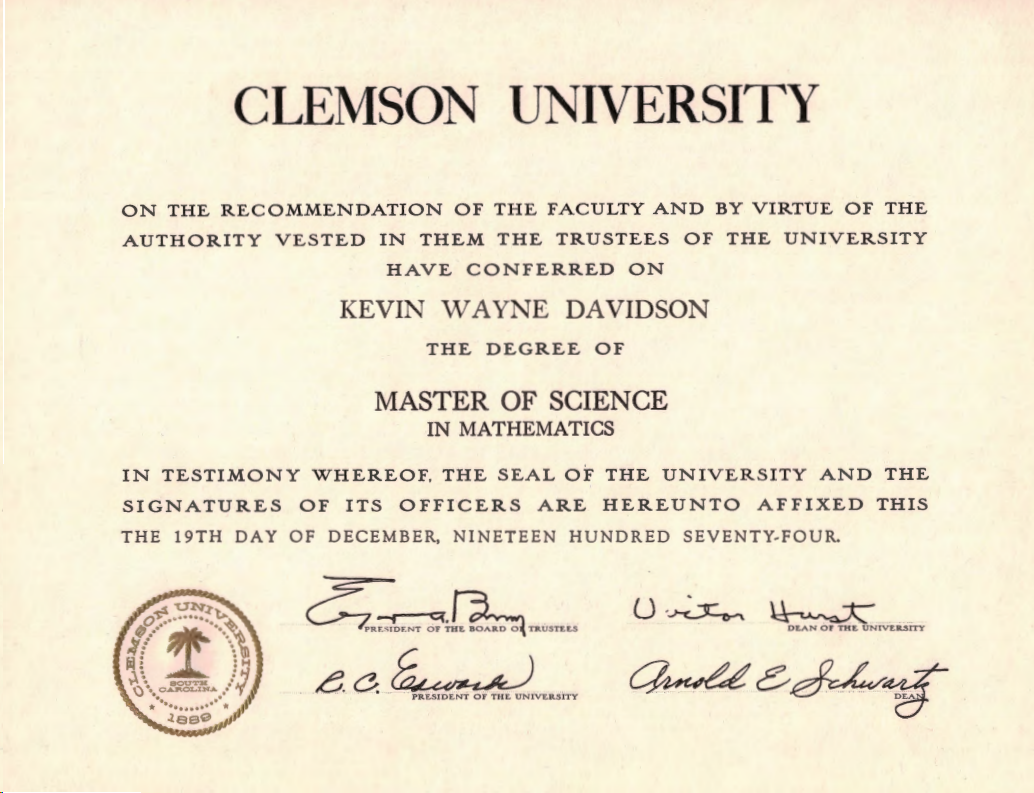

Debunking many birther claims is within the reach of the non-expert. If a birther says “X” is impossible, it is only necessary to show an example of “X” to prove it false. This business of the date stamp angles is going to require some expertise. Plausible–sounding statistical arguments can be wrong. As I frequently say, “I am not a real doctor, but I have a Masters Degree, in Science!” For this debunking, I am going to play the expert card, my MS in Mathematics from Clemson University.

Fallacy

The week I was born a man made 28 passes in a row at a Las Vegas dice table, something said to have only one chance in ten million of happening. Remarkable? The fact is that millions play dice every year, and that when something like this happens, it makes the newspapers. If an experiment is tried enough times, then unlikely outcomes become likely to occur. Ignoring the number of trials is the fallacy. Unusual events pique our interest, but they should not surprise us. These things happen every week.

A great example is the Pick 3 lottery number winner in Illinois the night after Obama was elected President: 666. What are the odds of that? Would you say “one in a thousand” (.001)? Notice that the event happened the day after the election, not on the day of the election. If it happened on the day of the election, the same claim of oddity would have been made. So isn’t the question better answered “what is the probability that 666 would come up within two days of the election?” So the .001 probability becomes .001999. But wouldn’t an anomaly have been declared if the number came up on Obama’s birthday? Inauguration day? The day the Electoral College voted? And would a claim had been made if the number came up in Hawaii’s lottery? If a longer winning number started or ended with “666”? The question becomes: “what is the probability that 666 could come up in some context related somehow to Barack Obama over some period of time?” There are many significant Obama events and things that can be coincidental with them. So what the actual question is: if you look at every detail of Obama’s life and every item coincident to it, what is the probability that a few odd things will be found? (And what about my 666 watch story?)

Another statistical fallacy, the prosecutor’s fallacy, was made by Christopher Monckton’s when he used calculations of the improbability of a combination of anomalies he thought were in Obama’s birth certificate as evidence that it was a fake.

At its heart, the [prosecutor’s] fallacy involves assuming that the prior probability of a random match is equal to the probability that the defendant is guilty.

For instance, if a perpetrator is known to have the same blood type as a defendant and 10% of the population share that blood type, then to argue on that basis alone that the probability of the defendant being guilty is 90% makes the prosecutor’s fallacy (in a very simple form).

— Wikipedia

His case was further undermined by the fact that his anomalies weren’t anomalous and that he used calculations for independent events, when the events were correlated.

Improbable events occur in our lives all the time. What were the chances that my wife visiting Kiev (population 2.8 million) in the Ukraine would have a chance meeting on the street with another graduate of Auburn University, when neither of them was wearing any emblem of that school? Do the math!

Here’s another example: The old philosophical problem of proving a negative. The example is the proposition, “all ravens are black.” It can’t be proven because it is false, but white ravens are rare. What is the probability that I would come across not one but three of them? If I decided to go looking in my back yard, the answer is “extremely small” (I don’t get ravens at all), but if I went looking for “white raven” in Google Images, not so unusual. And to tweak the result further, in all honesty I wasn’t looking for a set of three when I started out. I changed the rules after the fact.

Here’s another example: The old philosophical problem of proving a negative. The example is the proposition, “all ravens are black.” It can’t be proven because it is false, but white ravens are rare. What is the probability that I would come across not one but three of them? If I decided to go looking in my back yard, the answer is “extremely small” (I don’t get ravens at all), but if I went looking for “white raven” in Google Images, not so unusual. And to tweak the result further, in all honesty I wasn’t looking for a set of three when I started out. I changed the rules after the fact.

The story has gone around that college math professors get extra income by betting their classes that at least two people in the class will have the same birthday. Would you take that bet? Let’s do the math:

We’ll pick our first student and then compare that one to each of the others. Each student we compare has a 1 in 365 chance of matching, and a 364/365 chance of not matching. For the next student there are 363 dates that won’t match the first two, so the chance of theirs being different is 363/365. One multiples the two fractions together to get the compound probability of the teacher losing the bet for two students. The amazing result is that at 23 students, the odds are roughly 50/50 that there will be a match. For a class size of 30 the professor has a 75% chance of winning and with a class of 100, the chance of the professor losing is about one in a million. The object of the story is that improbable events are likely to occur in large samples.

The same mistake, ignoring sample size, leads to false identifications associating two online personages. What are the chances that two different people posted photos from the same PhotoBucket account and live in the same state, and have initials “RB.” I don’t know the odds, but there are two.

Let’s bring the examples a little closer to home. Let’s say I have a Hawaiian birth certificate, and an instance on one form where two characters have a certain spatial relationship, and then find another form where the two letters are in exactly the same relationship to each other. Let’s say that through some argument (that may be fallacious) you determine that the odds are one in a thousand that pairs would correspond. Have I found a very unlikely event? The answer is no for at least two reasons. First, I picked the pair after I found the correspondence. There are 196 typed characters on Obama’s birth certificate, yielding 19,110 pairs of characters to compare. So finding a one in a thousand event in a sample of 19,110 is not unlikely at all; it’s almost inevitable (better than the one in a million in our birthday example). The second error is assuming that the positions of the characters is independent. In fact a typewriter is designed to consistently put characters in exactly the same relation to other characters, line by line, day by day, in a grid that is 6 lines per inch vertically and 10 characters per inch horizontally. So rather than compute a probability assuming that the spacing is random (events are independent), we should be asking the question of how probable is it that these two character pairs are in the same relative position given that they were typed on the same model of typewriter (and based on font analysis, it appears that the same model Kapi’olani hospital typewriter typed all of its birth certificates), and likely the same typewriter.

Let me emphasize that we do not know anything about the methodology, analysis or assumptions made in the reports that Zullo talks about, but refuses to release. They may be sophisticated or naive, but they are almost inevitably wrong unless you assess the probability of a massive mutigenerational cover-up of the facts of Obama’s birth involving multiple administrations of both parties in the Hawaii Department of Health, 1961 Honolulu newspapers, and numerous White House staffers and the President of the United States as having a probability greater than one in a thousand. I doubt that we will ever see the analyses from Mike Zullo. I cannot critique what I haven’t seen and the confidentiality of his relationships with his experts is a screen that Mike Zullo hides behind.

What I do know is that the analysts Zullo consulted did their work based on samples that Zullo supplied, samples that could have been selected to skew the outcome. For example, we know that Reed Hayes was given the White House birth certificate PDF to look at, while not being shown the photographs of it, photographs that call into question his conclusions that no paper document existed. Hayes naively saw the pixelated portion of Stanley Ann Dunham Obama’s signature as proof that the signature was done in two parts, when in fact this was Xerox layer separation artifact that Hayes would not have seen had he been given other images of the birth certificate to work with. Was ForLab shown all of the date stamp samples in my article, or was the very close 1959 date angle omitted, and the very different Nordyke certificate, stamped by a different clerk, included?

Stay tuned for Part 2 where Doc gets his hands dirty with a real experiment.

Monckton’s mathematical illiteracy particularly grated me, because he should be smarter than that, and yet WND and the like took his nonsense math seriously. He just assigned odds to ‘things that happened’, multiplied them together, and trumpeted the low overall probability that his calculation spit out.

But this is like rolling a die a dozen times, recording each number, and then Monckton looks at your 12-digit series of numbers and declares “Those results must be faked! There’s less than a 1 in TWO BILLION chance that you could have rolled that series of numbers!”

That’s well stated, Loren.

Kevin is doing a great thing here. However, the problem with a detailed mathematical analysis is that it will fly over the head of the average person.

But let’s look at your example.

A person rolls a die 12 times, and gets the following result:

3, 5, 2, 1, 1, 6, 4, 3, 4, 6, 2, 4.

It’s absolutely true that she only had a 1 in 6 chance of getting a 3 on the first roll.

She also only had a 1 in 6 chance of getting a 5 on the second roll.

The odds that she would get a 3 and a 5, in that order, are 1/6 times 1/6, or 1/36. One in 36.

Likewise, her odds of rolling 3, 5, 2, are 1/6 x 1/6 x 1/6, or 1 in 216.

When you continue in this fashion, you find that the odds of rolling the exact sequence she rolled are 1 in 2,176,782,336.

Or, as you said, less than 1 in 2 billion.

But you could say that of ANY sequence of 12 rolls of the die.

This is basically what both Monckton and Zullo do, except with Zullo it’s even worse. He claims that elements were copied from one birth certificate to the other, except that if items are digitally copied, they generally have to be pixel-by-pixel identical.

If they aren’t pixel-by-pixel identical, then there has to be some explanation for why not.

Not only are Zullo’s images not pixel-by-pixel identical, they are pixel-by-pixel completely different.

It’s not even at all clear that they are, in fact, in the same position.

In fact, the great majority of them are quite clearly NOT in the same position.

This immediately proves (yes, PROVES) that the vast majority (at the very least) of his claims are FALSE.

Only one or two of Zullo’s claims are even plausible, in the sense that they at least appear to be possible. (And this ignores his complete lack of any explanation for why the images in those one or two cases are NOT pixel-by-pixel identical.)

But you can make plausible claims regarding just about anything.

It’s plausible that trader jack assassinated JFK.

It’s plausible that he murdered both Georgette Bauerdorf and Elizabeth Short. After all, he admits to having been in California at the time of both murders.

It’s plausible that JFK escaped assassination by having a double ride in the limousine in Dallas, and lived out the rest of his life in secret in Norway.

It’s plausible that Fidel Castro is Paris Hilton’s real father.

All of the above scenarios, and a vast many more, are plausible. There’s no compelling evidence for any of them, however.

Perhaps the greatest birther fallacy is to take that which is plausible, for which there is no real evidence, and argue that plausibility equals evidence. It doesn’t. Not in the slightest.

However, they do this for whatever fantasy they have, that they would like to be real. “Because it appears possible that this could have happened, and because I really want it to have happened, it happened.”

No, it didn’t. You simply wished for something.

I can wish for a pet unicorn all day long. After all, people keep talking about them, so they must exist somewhere, right? As the birthers are fond of saying, “Where there’s smoke, there’s fire.” Surely people wouldn’t keep talking about unicorns if they didn’t exist somewhere.

But that’s another fallacy in itself.

And no matter how hard I wish, it doesn’t mean I’m going to get a unicorn for Christmas.

Pete

Well said. The basest logical fallacy that Birthers commit is begging the question. They start with the assumption that Barack Obama was not born in Hawaii therefore no authentic birth certificate could exist.

If you listen to Zullo on the Hagmann report you can pick this out as plain as day. Zullo tried to explain how the copy and paste theory originated. Zullo had staged two press conferences and the evidence had been shown to be either obviously false or easily explainable by reasons other than some sinister conspiracy.

An honest person would stop and say well we tried for two years but you know this thing looks real. What did Zullo do? He said we knew we had to look in another direction, Now that sounds an awfully lot like someone who had already made their mind up and was going to take as it turned out the rest of Arpaio’s term in office to come up with anything that he could use to make a lame claim that Ah’Nee’s certificate was somehow a source document.

Any real scientist or investigator will tell you that assuming the conclusion and working backward is usually a bad idea and can lead to an embarrassing outcome. Even wanting really badly for something to be true can bite you. Just ask Professors Pons and Fleischman how that worked out for them.

Now that doesn’t mean you shouldn’t have an opinion about a conclusion. For example, if I had decided I was going to set out to prove that Einstein’s General Theory of Relativity is completely wrong I should have the knowledge that there is an overwhelming chance I am wrong. That’s why in real science extraordinary claims require extraordinary evidence. And that’s why standard recognized methodologies and a peer review process exists.

In our legal system the assumption is that government issued documents are valid without real evidence to the contrary. There is a reason it was so important it was actually included in the Constitution that government issued documents are one of the few exceptions to the hearsay rule. For commerce to run efficiently this was necessary.

Government bodies like Hawaii are asked to hold up their end of the bargain by maintaining accurate records that can only be accessed by authorized people . They do a very good job at that. A successful forgery of a government issued document is rare. A forgery validated by the issuing authority is unheard of.

In other words Zullo is lying.

To return to Pete’s point on plausibility, Zullo likes to carp on the birth-certificate fraud that occurred in New Jersey and Puerto Rico, and of course the infamous birth certificate of Sun Yat-sen issued by the Territory of Hawaii.

Those incidents of fraudulently issued birth certificates (and not forged ones, as Zullo is insinuating that occurred with President Obama) prove only that fraud is plausible because it has happened previously. Those incidences, however, in no way show that it is probable that fraud (or forgery) occurred when Hawaii issued President Obama’s birth certificate. Because there is no competent evidence of fraud (or forgery) occurring with respect to President Obama. All there is is the lies, rumors, innuendo, gossip, and speculation of dead-enders and bitter-clingers who have wasted so much of their lives over literally nothing,

This is sheer idiocy.

I mean, really. It’s the classic recipe for confusing whatever fantasy you like with reality.

Which, of course, is what birthers have done for the past 8 years.

OTOH, maybe they’re onto something here.

Jessica Alba has the hots for me. I know this because I’ve assumed it to be true.

But there’s good evidence for it. I mean, look at her latest two tweets:

“Definitely one of the most surreal moments of my life- This sweetie pie young officer drove by outside my friends… ”

And…

“Love you babe! Last nights Pajama jammy jam was a blast! Love celebrating you with an epic pizza pajama game… ”

Did you catch those phrases? “sweETiE Pie”? “EPIC PIZZA?”

She’s saying “Pete.” “Pete.” In every tweet she writes. It’s all encoded, right there.

And did you catch the “Love you babe!”?

It’s 1000% proven. Jessica Alba LOVES me!

I get a kick out of how birthers still refer to that cut-rate Marty Feldman wannabe as “Lord”, long after his claim to that title was discredited, all the while insisting Obama’s bonafides are fake.

I like how birthers express grave concern about President Obama’s purported foreign allegiances, but have no problem relying on foreign authorities, like this “Lord,” a disbarred English barrister, and some business in Italy.

My Jessica Alba fantasy illustration, actually, is precisely what birthers (including but not limited to Zullo) have done.

Now, to be sure, there were a large number of birthers. Only one of them originated the fantasy – “Jessica Alba has the hots for you.” The rest of them simply latched onto it.

They then spent 8 years looking for whatever remote happenstance they could claim to be evidence to support the idea.

And, as demonstrated above, if you assume something, it’s not hard to find some bit of nonsense that will “support” it.

The thing that amazes me is that there are people like scott e, who will at least appear to cling to the fantasy when every. single. bit. of “evidence” that has been brought forth over 8 years has been shown to be as much nonsense as Jessica Alba’s “Pete” tweets.

I’m not sure whether it’s possible for stupidity to be “world class,” but if it is, birthers have earned the title. Particularly those who’ve been active about it, not just casual.

Except that in so many cases, it wasn’t just stupidity. It was active dishonesty. They simply found it to be in their interest to promote false claims.

I think the ones like scott e were so emotionally, and probably financially, invested into the birther lies, that they desperately need to feel like they got something out of it. Even if it means just clinging to an empty “Well, something doesn’t add up, and Zullo was able to prove it! Even if no one takes it seriously.” A no “win” is too small philosophy, if you will.

As I recall, even before the final “conference”, P&E was settling for “Nothing is going to happen to Obama, but the people will at least know the truth!” sort of mantra.

At this point, it is painfully obvious that the few remaining birthers still believe because their egos can’t admit they were wrong: It is easier for them to believe in a vast, ridiculous conspiracy than admit to their own fallibilities.

Speaking of coincidences, a few years ago I was in Hollywood with a group from the Home Theater Forum. We were being given a private tour of Paramount Studios and we broke up into small groups. Each group was assigned to a studio page who showed us around. My group’s page was a young woman who probably was no older than 25. During the course of the tour she asked us where we were from. When I told her that I live in New York, she said, “Oh, I grew up in New York.” When I asked her where, she replied “Pound Ridge,” which is 9 miles from where I grew up. Then I asked her where she went to high school and she said “John Jay High,” which is the same high school I went to 45 years earlier.

During those 45 years the school graduated roughly 7,000 students, yet two of us randomly met nearly 3,000 miles from home. Yes, birthers, coincidences do happen.

I think we had this conversation before, I was also born and raised in Westchester County: New Rochelle Hospital, graduated from Pelham Memorial High School. I did Boy Scout activities in Pound Ridge.

I couldn’t agree more. For them to be wrong means Barack Obama was right and they can’t have that. I’m currently debating online with two hard core birthers who were invigorated by the Arpaio/Zullo “press conference,” one on the PDF of the birth certificate being forged and the other on missing INS records for the week of Obama’s birth. Jeez!

I quoted these dudes for them and I’m sure you know that they’re about as right as you can go: In a December 8, 2008, column headlined “Obama Derangement Syndrome,” David Horowitz, editor of the conservative website FrontPage Magazine, blasted “continuing efforts of a fringe group of conservatives to deny Obama his victory and to lay the basis for the claim that he is not a legitimate president” as being “embarrassing and destructive.” He added: “The fact that these efforts are being led by Alan Keyes, a demagogue who lost a Senate election to the then-unknown Obama by 42 points, should be a warning in itself.”

Michael Medved, conservative radio talk-show host, referred to the leadership of the so-called “birther” movement as “crazy, nutburgers, demagogues, money-hungry, exploitative, irresponsible, filthy conservative imposters” who are “the worst enemy of the conservative movement.” According to a Politico article, Medved stated: “It makes us look weird. It makes us look crazy. It makes us look demented. It makes us look sick, troubled, and not suitable for civilized company.”

Pound Ridge Reservation, no doubt. That’s a beautiful area.

I live in Dutchess County now but as an adult I’ve also lived in Connecticut, Arizona, California and Colorado.

And me, Richmond, Virginia, Fargo, North Dakota, Minneapolis and the last twenty-nine years in San Diego.

You’re bringing back memories of family picnics at Croton Point and Indian Point where the nuclear power plant is now and Rye Playland Amusement Park!

No, no, Mr. Check. The geniuses over at sci.physics.relativity have already proven that Einstein’s theories are all wrong. In fact, so obviously and stupidly wrong that only a giant conspiracy could possibly sustain them.

Studying fringe thinking has been a hobby of mine for decades. Birtherism was great fun. It had all the classic elements, plus the internet fanning the flames. Alas, we knew from the start that it was a crank theory with a time limit, if only because of the Twenty-Second Amendment. It’s winding down, but I expect the new administration to bring out many fascinating new specimens for kookologists.

Or maybe I should follow the Doctor’s example with Habitat and go do something productive with my time. Nah.

My favourite example is a case of the German lottery having the same numbers as the Dutch lottery a week before, resulting in 300+ winners (who thought they were the only one using the same numbers as from the Netherlands the week before). Instead of millions, each winner only got around 30,000 DM.

An even more enlightening example is this:

If the probability that another person leaves the same DNA traces as the defendant is 1 in 1 billion, it doesn’t mean that the chance he’s not guilty is 1 in 1 billion but that there are in fact 6 people in the world who would’ve left the same traces.

So the chance the defendant is not guilty is 6:7.

(One could factor in the question how probable it is any of these persons lives next door. I once saw a documentary about dopplegangers, and that people who almost lived next door looked like twins.)

Rickey: Yes, birthers, coincidences do happen.

You and I are neighbors.

My neighbors and I defeated the great “deal maker” Trump twice:

Once when he wanted to construct a golf course on his property in our neighborhood. He even offered a few of us a free membership if we voted “yes”. We voted NO. His attorney was Judge Jeanine’s hubby, Al Pirro.

The second time was when Trump allowed his buddy, Gaddafi, to pitch a tent on that same property and lied about it. We forced him to remove it.

“According to four U.S. and Libyan sources, Trump sought to use the opportunity to gain access to Qaddafi, who was in a position to release billions in investment capital”

https://www.buzzfeed.com/danielwagner/how-trump-tried-to-get-qaddafis-cash?utm_term=.jvy5zwBeO#.wo2ERVJYD

The most interesting thought – Could Obama have cleared the Birther Conspiracy Theory up, and contributed 50 Million to his favorite charity? In the video is a reminder Obama was offered 50 Million to his choice of Charity?

Not for marks, but a disclosure of congruent and multiple sources of identification and original birth place.

Could Trump have won the U.S. Presidency if he had been forced to pay a Charity of Obama’s Choice 50 Million Dollars? Can we then say Obama had made an agreement that Trump was to be President?

Rewarding Forgery Awarding Fraud

https://www.youtube.com/watch?v=ANqhpkkVH8s

Only an idiot such as ex-con Cody would believe that Trump would have actually paid. (And only a narcissist such as ex-con Judy would consider that to be “most interesting” or even a “thought.”)

And given birthers’ unblemished record of ignoring all evidence, only an idiot would believe that birthers would ever be satisfied by anything that proved they were wrong.

Only someone as delusional as ex-con Judy would come to such a ridiculous conclusion.

Seems SpongeBob is the delusional type in writing a Conclusion is marked with a Question mark.

Slow down there square pants let’s ask ourselves how many black ravens would have put it to Trump benefiting our favorite Charity 50M?

So ex-con Judy admits that not even he would answer his own ridiculous question in the affirmative.

As Trump was never going to pay, the answer is zero. Which is why everyone (except idiots like ex-con Judy) paid no mind to Trump’s publicity stunt.

Under the current administration, the President is not for sale.

That will change soon enough.

Trump doesn’t pay his contractors, so why would he pony up $5 million (he never said anything about $50 million) for charity? He had to be publicly shamed before he paid the money he promised to veterans groups.

he also said it would have to be to his satisfaction, so all he would have had to say was he was not satisfied and welched on the offer…..which he would have done.

Just as Farah welched on his promise to make a donation to Kapiʻolani Medical Center if Obama’s LFBC was released.

I knew you did not watch the video all the way

Rewarding Forgery Awarding Fraud Trump says 50M You only ignore such a Public Bet if your guilty of Identification fraud , , and don’t want to put a sock in a big mouth, don’t want HRC to win, or really hate Charities. Obama has one or two of those excuses.

https://youtu.be/ANqhpkkVH8s

The Media would have had more fun with that then they did with Muammar Gaddafi’s Trump Tent

Excuse me, you caught me thinking like a Politician in Public Debates. I can’t think of anything better in a public debate than having Trump a Billionaire owe me 50M to my favorite Charity and him not being able to pay.

A bet like that every sports fan in America would be expected to pay. Of course you guys probably don’t see things like common folks do on account of your elitist educations and pajorative labeling habits.

Don’t you ever get tired of taking Obama’s side? You’d defend his brand of toilet wipe you’re a good Kool-Aide drinker, I’ll give you that but GroupThink is a faulty young weakness and you know it.

https://en.m.wikipedia.org/wiki/Groupthink

I think Obama would be a great sports friend and he’s a great Dad and Husband. I simply don’t believe he’s legal in the Office of President.

It’s precisely Obama’s penchant for competition in Sports, golf, basketball that is obviously a trait that exposes him from taking Trump’s 50M bet for Charity.. simply for producing what he refused to. . And doing it in public.

Of course: The only person watching ex-con Judy’s vanity videos is … ex-con Judy.

Reality is not as constrained as ex-con Judy’s imagination: President Obama ignored an obvious conman seeking publicity. As only an idiot like ex-con Judy would think Trump would have paid.

Ex-con Judy certainly isn’t thinking like the president, who had more important things to do than wrestle in the mud with a pig.

Trump habitually lies, yet sports fans (and others) still listen to him.

Many people disagree with President Obama about many things. But ex-con Judy wouldn’t know about those disagreements because he is interested in talking only about himself.

Because ex-con Judy believes in lies, and not reality.

President Obama had already — vountarily — produced sufficient evidnece of his natural-born citizenship. And he was smart to know that there’s simply no way to satisfy the unsatisfiable.

—

Well that’s mighty white of ya.

Correction: I didn’t watch your video at all.

Barack Obama does not have ownership of his original vault copy birth certificate. He is only entitled to receive copies of that document, which he did receive and post to the Internet for the whole wide world to see.

Therefore the bet was always an impossibility. The state of Hawaii owns and controls the original vital record and the state would need to change its laws in order to make the bet possible.

In spite of not talking up the birth certificate challenge, Barack Obama is leaving office as a well-liked, well thought of president with a 60% job approval rating and a 61% personal favorability rating. He only received 51% of the vote for reelection.

ABC News/Washington Post Poll. Jan. 12-15, 2017. N=1,005 adults nationwide. Margin of error ± 3.5.

“Overall, do you have a favorable or unfavorable impression of Barack Obama?” 9/08 & 10/08: “Regardless of how you might vote, do you have a . . . .”

Favorable: 61%

Unfavorable: 36%

Unsure: 4%

1/12-15/17

http://www.pollingreport.com/obama_fav.htm

Judy has obviously never read David Fahrenhold’s many articles about how Trump stiffed contractors and charities out of money he promised them.

I’ve always wondered how the Congress can claim to not be a ‘rubberstamp’ for Trump when they don’t have any leverage. He wants to run the country like he would one of his businesses and thinks going bankrupt is good business strategy. What good would it do Congress to threaten to shut down the Government and/or default on the national debt?

This article has been updated with a popular example that illustrates the fallacy better.

What are the chances that Orly Taitz and I would be rear-ended by a truck on the SAME DAY. It happened. I’ve been driving for 50 years, and have only been rear-ended by a truck twice. You can do the arithmetic. I don’t know about Orly.

In high school I had a classmate who was born on the same day as me, and also in the same hospital as me.

3 other classmates, same date, same hospital for me.

I think these coincidences are manifestations of the birthday paradox or birthday problem in statistics. It goes something like this. Take any group of 23 people. The odds of any two people having the same birthday are 50-50. If you increase the size of the group to 75 the odds are over 99.9% that at least two will have the same birthday. It seems counter intuitive but it is true even though the probability of another person having the same birthday as you is 1/365 (ignoring leap babies born on February 29).

Life expectancy statistics also are very interesting. According to the latest data, I should live to be 83 1/2. However, if I make it to 83 my chance of dying during that year will be only about 8% and I should live to be 89 1/2. If I make it to 89, the statistics say that I should live to be 93. And so on.

This is the birthday paradox at work. The fact that they were classmates populates the group with people born the same year.

I had added that example to the article.

When I was working, I did record matching on large databases, including the statewide immunization registry in Tennessee. I was surprised at the time how frequent it was that two or more distinct individuals were born on the same date with identical names.

In a small database, say 100,000 records you don’t see many duplicate name/dob pairs, but when the database goes into millions like with vital records or immunization registries, they are common.

When we do voter registration lookups (within a county), we typically type in the first few characters of the last name and first initial. It almost always gives a unique result, and about the only time it fails is when you’re dealing with JR/SR.

Record matching in large databases is just something that most people lack experience with, and their intuition leads them astray. I guess the same could be said for any large number problem. That’s when the mathematical discipline should take over.

Sample size is very important.

Years ago a man I knew, who was in the life insurance business, visited a corporation in Phoenix which was self-insured for workers’ compensation (meaning that the corporation handled and paid its own claims, rather than buying insurance). The corporation had about two dozen employees who were permanently disabled and were going to receive payments for the rest of their lives. The insurance man was trying to convince the corporation that it made sense for it to buy annuities to make those permanent disability payments. He asked the head of the company’s claims department how they calculated how much money they set aside for future payments. He replied that they looked at the life expectancy tables and set their reserves accordingly.

The insurance man explained to the claims manager that they were taking a terrible risk of becoming underfunded down the road because their sample size, two dozen, was too small to be able to make reliable assumptions about life expectancy. What if all 24 of them lived ten years beyond what the mortality rates showed?

The life insurance companies, on the other hand, because they have a huge sample size of policyholders, can rely on the mortality tables. In a sample size of one million, on average for every person who outlives his or her life expectancy there is another who dies much younger. In a sample size of two dozen, it is far more difficult to predict how long they will live.

My wife does interviews for the Bureau of Statistics (did you work last week, did you want to work more hours, etc, etc). Some people cooperate nicely, some people are pr1ks about it.

After one special purpose questionnaire, the office bound admins asked her how come her participation results were above average. I suggested she tell them to ask the Chief Statistician what an average was.

Yes, that’s correct. I have been to a number of reliability engineering classes and the human body follows a reliability curve (or mortality curve of you prefer) like most machines and electronic components known as the “bathtub curve”.

At birth the death rate is higher due to infants born with defects and vulnerability to diseases (infant mortality). Then it decreases to a low but gradually rising level through childhood into middle age. At around late middle age the mortality rate enters the wear out phase and rises dramatically; the older you are the steeper part of the curve you reside.

The value of the curve at each point represents the chances of death in a year or given particular period of time. None of us ever gets out of the tub alive.

your analysis is bogus. They were saying 29 straight passes or wins. That is a one to one basis. You might cast the dice 10 times or more before you win or lose, the side betters might to do better.

I got that from a probability textbook. Go away. You have nothing to offer.

But Sir, I disagree. trader jack does have something to offer.

A living example of Dunning-Kruger Effect.

Someone might get the impression that Doc. C is not fond of Trader Jacques.

Well, that’s a great thing about Dr. C.

Many other web site proprietors would just ban tj and be done with him.